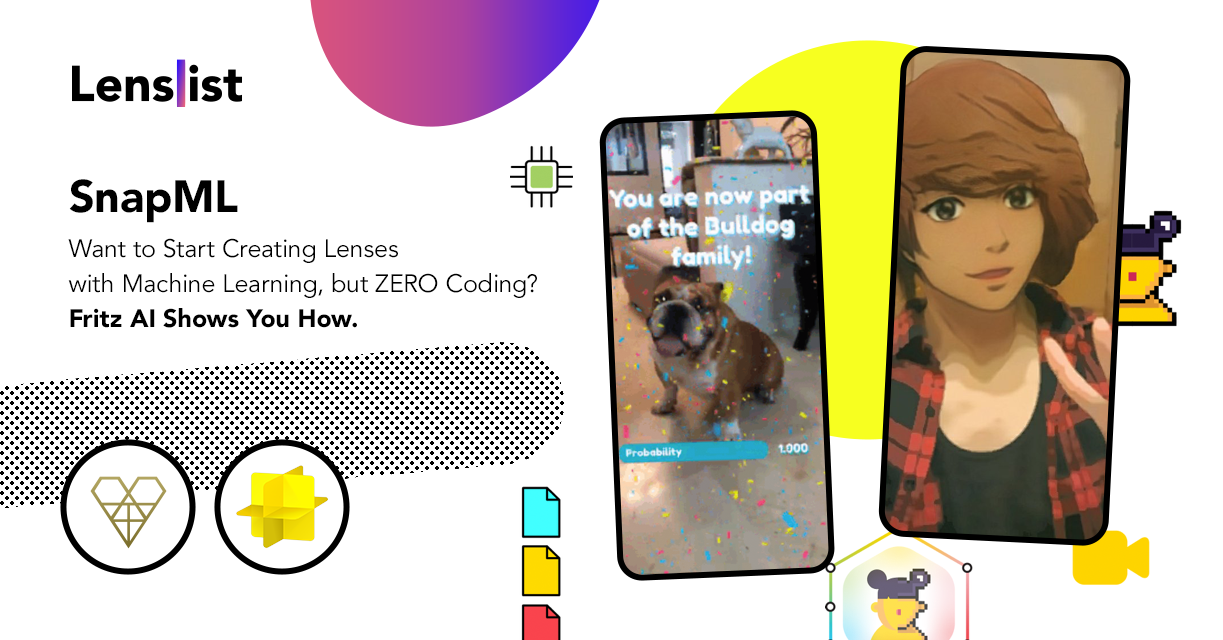

SnapML – Want to Start Creating Lenses with Machine Learning, with ZERO Coding? Fritz AI Shows You How!

If you’re a Lens Creator who hasn’t explored machine learning yet, you might be surprised to learn that you have probably already used it without realizing it. Segmentation, skeletal tracking, and other Lens Studio features use ML to make your Lenses even more advanced and interactive 😎🕺

The power of SnapML

What’s more, some of this year’s most viral Lenses were the ones using machine learning. A great example of how powerful SnapML can be is the Anime Lens published by Snapchat back in September. The whole Internet was talking about it, and it was so successful that Snapchat noted it as one of the highlights when publishing its Third Quarter 2020 Financial Results. According to that document, the ‘Anime Style’ Lens, powered by real-time machine learning, was engaged with 3 billion times in its first week 😮

Anime Style

by Snapchat

Machine learning – seems hard, but is it really?

There’s no question – machine learning brings amazing opportunities to the Creator community and to Snapchat itself. So, one might ask… Why aren’t there more SnapML Lenses? Machine learning is a super advanced technology that enables a whole variety of use cases, from training algorithms and developing robots to producing self-driving cars. Pretty intimidating, isn’t it? No worries: when you put the Snapchat camera and ML together, everything seems… more possible. Lens Studio has provided us with SnapML Templates, more ML Templates (foot tracking included) and… another Model Zoo to convince you! 😄

User-friendly ML by Fritz AI

In case this was not encouraging enough, there is a no-code, user-friendly solution you should definitely try. With Fritz AI you can easily build custom ML models ready for Lens Studio – no machine learning expertise required. We talked with Dan Abdinoor, CEO and Cofounder of Fritz AI, and asked him how they came up with their product and how you can get started with their software 👇

When did you come up with combining your product and skills with the tools provided by Lens Studio? 👻

Dan: We understand the transformative nature of machine learning, especially when applied to mobile experiences. But we also understand that there are significant barriers to entry—that’s why we were one of the first startups to create a product that makes ML more accessible and approachable for those who don’t have an ML or data science background.

So when the Snap team introduced SnapML earlier this year, we saw it as the perfect fit for our technology and our mission—a new mobile machine learning platform that can instantly reach millions of people around the globe. We knew immediately that we wanted to give AR Creators the chance to truly access this technology, and so we jumped right in and built support in Fritz AI for SnapML projects.

How do you get started with a Fritz AI x SnapML project? 🚀

Dan: Fritz AI is a webapp, so there’s no additional software that you need to download or install.

When it comes to SnapML, there are two ways to get started with Fritz AI. First, we have a growing collection of pre-built project templates. With these, you can simply download a template Lens Studio project—with the ML model hooked up and preview images loaded in Lens Studio—and start creating without worrying about the ML side of things at all. This is the quickest way to jump in.

If you’re looking for something a bit more custom, you can train your own models and export them as Lens Studio projects—no code involved. You can even generate large amounts of data with Fritz AI if you don’t have your own ready-to-train dataset. So you can come to Fritz AI with simply an idea, and our platform helps you turn it into a reality, eliminating common roadblocks along the way.

What kinds of use cases do you see for your product when Creators offer these solutions to their brands or clients? 🤔

Dan: Fritz AI allows Creators to make pretty much anything they can dream up in Lens Studio. Right now, we see that Creators are limited by the template projects Snapchat offers, their own limited ML expertise, or both. With the ability to create custom models without the need for code or ML expertise, Creators are now only limited by their imaginations.

Currently, we offer the following model and project types:

Style Transfer

Take the style of one image and apply its visual patterns to the content of another image/video stream. This is a great way to reimagine famous works of art or experiment with a variety of color and style palettes.

Image Labeling

Image Labeling simply predicts labels for what is seen within an image or video frame. These project types are best for triggering global AR effects. For example, you might have an image labeling experience that understands you’re looking at a beautiful dinner table topped with food.

Object Detection

Object Detection takes things a step further than Image Labeling by locating specific objects in the scene. If you want to anchor an AR effect to an object as it moves through a scene, then Object Detection is a good model type to experiment with. Consider the dinner table example above: with Object Detection, you could locate a turkey on a Thanksgiving table, rather than just recognize the table of food as a whole.
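For readers curious about what this difference looks like in data terms, here is a minimal Python sketch. The output shapes are assumptions made purely for illustration (label/confidence pairs for Image Labeling, labeled boxes in normalized coordinates for Object Detection); they are not the actual format produced by Fritz AI or Lens Studio, and every name in the snippet is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical detection result: a label, a confidence, and a box."""
    label: str
    confidence: float
    # Assumed box layout in normalized image coordinates (0..1).
    left: float
    top: float
    right: float
    bottom: float


def should_trigger_global_effect(labels, wanted, threshold=0.5):
    """Image labeling: decide whether a whole-frame effect should fire."""
    return any(name == wanted and score >= threshold for name, score in labels)


def anchor_point(detections, wanted, threshold=0.5):
    """Object detection: center of the most confident matching box, or None."""
    matches = [d for d in detections
               if d.label == wanted and d.confidence >= threshold]
    if not matches:
        return None
    best = max(matches, key=lambda d: d.confidence)
    return ((best.left + best.right) / 2, (best.top + best.bottom) / 2)


# Made-up example values for a single camera frame.
frame_labels = [("dinner table", 0.91), ("food", 0.84)]
frame_detections = [
    Detection("turkey", 0.88, 0.25, 0.25, 0.75, 0.75),
    Detection("plate", 0.75, 0.0, 0.5, 0.25, 0.75),
]
```

Labeling only answers "is there food in this frame?", which is enough for a global effect. Detection also answers "where is the turkey?", which is what lets an effect follow the object around.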

Segmentation

Segmentation models produce pixel-level masks for green-screen-like effects or photo composites. Segmentation can work on just about anything: a person’s hair, the sky, a park bench—whatever you can dream up, really.
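As a rough illustration of how a pixel-level mask drives a green-screen-like effect, the Python sketch below blends a camera frame with a replacement backdrop. It assumes images are nested lists of RGB tuples and that the mask holds values from 0 (background) to 1 (subject); this representation and the function name are hypothetical, chosen for readability rather than taken from Fritz AI or Lens Studio.

```python
def composite(foreground, background, mask):
    """Blend two same-sized RGB images per pixel using a mask in the 0..1 range.

    A mask value of 1 keeps the foreground pixel, 0 shows the background,
    and values in between mix the two (useful for soft edges such as hair).
    """
    result = []
    for fg_row, bg_row, mask_row in zip(foreground, background, mask):
        row = []
        for fg, bg, m in zip(fg_row, bg_row, mask_row):
            row.append(tuple(round(m * f + (1 - m) * b) for f, b in zip(fg, bg)))
        result.append(row)
    return result


# Made-up 1x3 frame: the mask keeps the first pixel, mixes the second,
# and replaces the third with the backdrop.
camera = [[(200, 100, 50), (200, 100, 50), (200, 100, 50)]]
backdrop = [[(0, 0, 250), (0, 0, 250), (0, 0, 250)]]
person_mask = [[1.0, 0.5, 0.0]]
blended = composite(camera, backdrop, person_mask)
```

In a real Lens the model supplies the mask every frame and the blending runs on the GPU; the arithmetic per pixel is the same idea.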

More info on Fritz AI soon on Lenslist

Since we really want to see more ML-powered Lenses, we will come back with a more in-depth article on SnapML and Fritz AI, where we will walk you through the software itself! Until then, try it yourself – Fritz AI for Snapchat Lens Creators is free to use while in beta 😉