Snapchat Announces Major Updates, Introduces Lens Studio 3.0 and Gives Creators Even More Freedom

The new version of Lens Studio comes with a new look and new templates including those using Machine Learning, like hand gestures, foot tracking or custom segmentation. But the real game-changer is a feature called SnapML: it gives creators unprecedented freedom by enabling them to add their own ML models to enhance their lenses.

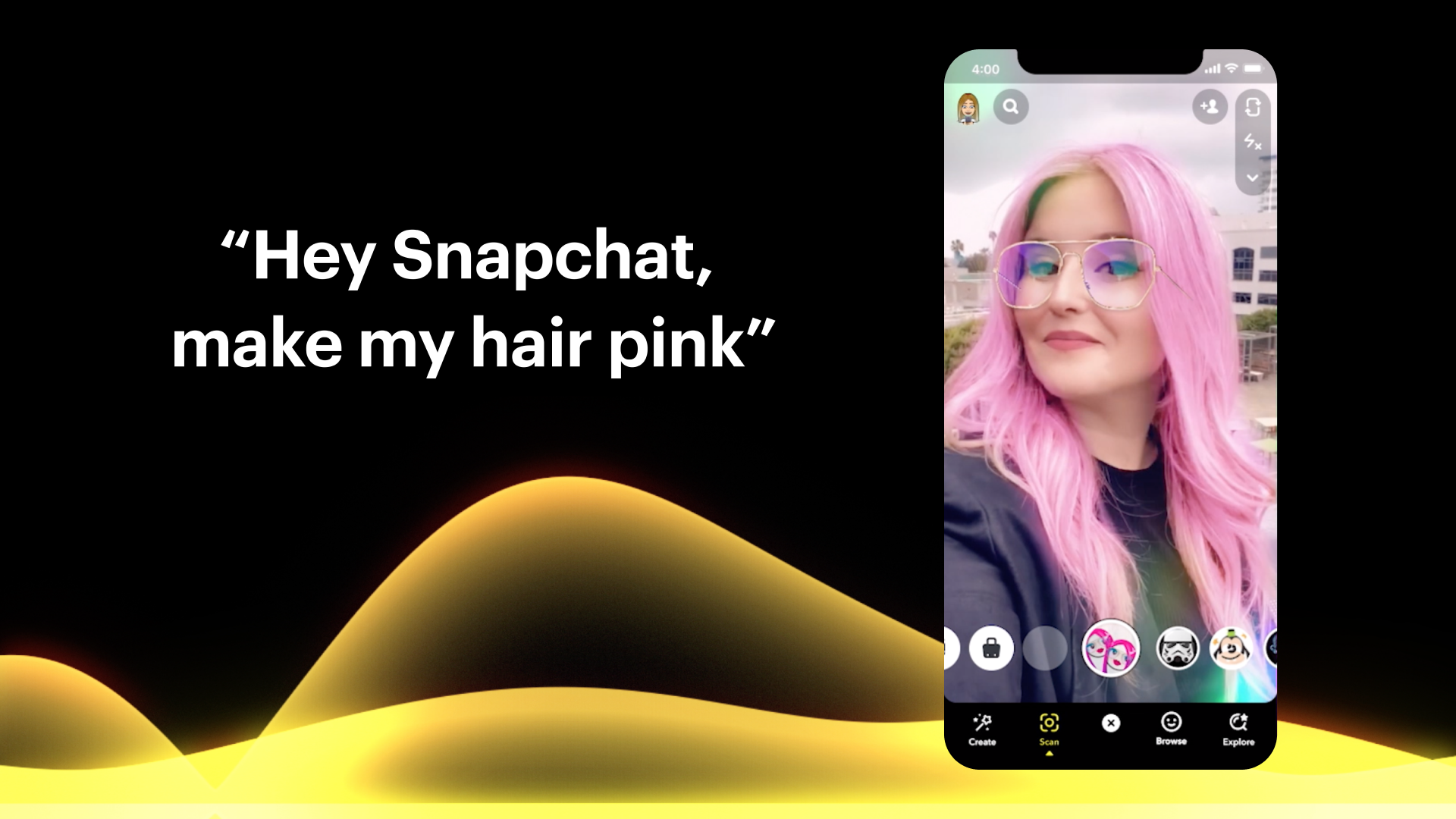

Besides the Lens Studio update, Snapchat introduced a few AR-focused improvements that should interest both marketers and developers. Voice Scan is a feature that recognizes voice commands to search for specific lenses. As presented during the Snap Partner Summit, you can, for example, ask Snapchat to show you lenses that will turn your hair pink. Camera Kit is another new feature: it brings the power of Snap Camera into custom iOS or Android apps and lets you create unique AR experiences outside of Snapchat (read more).

Source: snapchat.com

Also announced, but yet to come to Lens Studio, are Local Lenses, an improvement on Landmarkers. See the video below to wrap your head around this idea:

What exactly is SnapML?

Machine Learning was already used in some Lens Studio templates. ML expands AR capabilities and makes lenses even more fun. With the latest update, Snapchat introduced some exciting new templates that use ML, but the bigger change is that creators can now add new features to Snapchat lenses themselves. By importing their own ML models into Lens Studio, creators gain almost unlimited flexibility in developing lenses. To show the potential of SnapML, Snapchat cooperated with Wannaby, an AR company that developed the first mobile app for trying on sneakers in AR.

Foot Tracking by Wannaby

The Foot Tracking template is available to every Lens Studio user, but it was not developed by the Snapchat team! The author is Wannaby, an external developer that had a chance to use SnapML before it was released (read more).

Source: snapchat.com

Let’s take a minute to realize what this means: foot tracking, a feature long awaited by both the Instagram and Snapchat communities, was introduced not as a new capability by Snapchat developers, but by an outside company using just Lens Studio and SnapML! It shows how powerful the new feature is and how much freedom it brings to lens creators and other AR developers.

Selection of new templates

Lens Studio 3.0 also introduced some exciting new functionality in the form of templates. Here are the ones we found especially noteworthy.

Hand gestures

Finally, lenses can react to different hand poses and gestures to trigger AR effects!

Source: snapchat.com

Custom segmentation

Until now, creators could only use segmentation to create masks for certain predefined things, such as the sky, hair or background. Now, thanks to ML, creators can add their own models and use them for segmentation. An example template lets users create a mask for pizza (read more).
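To make the idea of a segmentation mask more concrete, here is a minimal sketch in plain Python. It is not Lens Studio code and does not use any SnapML API: the function name `apply_mask` and the tiny 2×2 "images" are assumptions made for illustration. It only shows the general principle that a model outputs a per-pixel mask (1.0 means "this pixel is pizza", 0.0 means "it is not") and the effect is blended in according to that mask.

```python
def apply_mask(frame, effect, mask):
    """Blend `effect` over `frame`, weighted per pixel by `mask`.

    All three arguments are 2D lists of floats with the same shape.
    A mask value of 1.0 shows only the effect, 0.0 only the camera frame.
    """
    return [
        [m * e + (1.0 - m) * f for f, e, m in zip(frame_row, effect_row, mask_row)]
        for frame_row, effect_row, mask_row in zip(frame, effect, mask)
    ]


# Hypothetical grayscale data: a dark camera frame and a bright effect layer.
frame = [[0.25, 0.25], [0.25, 0.25]]
effect = [[1.0, 1.0], [1.0, 1.0]]
# Hypothetical model output: top-left pixel is fully "pizza", bottom-left is half.
mask = [[1.0, 0.0], [0.5, 0.0]]

blended = apply_mask(frame, effect, mask)
print(blended)
```

In a real lens the mask would come from the imported model for every camera frame, and the blending would happen on the GPU rather than in nested lists.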

Face expressions & landmarks

Snapchat introduced advancements for creating 2D and 3D lenses that track facial coordinates and gestures.

Source: snapchat.com

Ground segmentation

Modify the ground using masks imported with SnapML.

Source: snapchat.com

Classification

Use Machine Learning to “determine whether a thing of a certain class is detected or not and apply an effect based on this information” (read more). As an example of a classification ML model, the Snapchat team added a template that recognizes whether the user is wearing glasses.
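The "detect, then apply an effect" logic can be sketched in a few lines of plain Python. This is an illustration only, not the template's actual script: the names `is_detected` and `effects_for_frames`, the 0.5 threshold and the example scores are all assumptions. The sketch assumes the classifier returns a confidence score between 0 and 1 for each camera frame.

```python
def is_detected(score, threshold=0.5):
    """Return True when the classifier's confidence reaches the threshold."""
    return score >= threshold


def effects_for_frames(scores, threshold=0.5):
    """Decide, frame by frame, whether the effect should be shown."""
    return [is_detected(score, threshold) for score in scores]


# Hypothetical confidence scores for "user is wearing glasses" on three frames.
scores = [0.1, 0.7, 0.5]
decisions = effects_for_frames(scores)
print(decisions)
```

Raising the threshold makes the effect trigger less often but with fewer false positives; the right value depends on the model.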

Source: snapchat.com

Multi-segmentation

Create masks for the ground, plants and sky and use them in a lens simultaneously.

Source: snapchat.com

The full list of new Lens Studio features is available on snapchat.com.

Summing up, Lens Studio 3.0 is a huge step forward for social media AR. As Sergey Arkhangelskiy (Wannaby’s CEO) said during the Snap Partner Summit 2020:

“SnapML empowers creators to bring any idea they have into Lens Studio and build lenses with it.”

Thanks for reading! Let us know if you take part in any AR project using SnapML. We can’t wait for the first case studies from the community 😊